How much water does AI use? The question is on many people's minds today, and if you are reading this, it is probably on yours too.

Artificial intelligence doesn’t drink water like humans do, but the systems that power it, data centres and the electricity generation behind them, consume very large volumes of water as part of their operation.

This water footprint is both direct and indirect, and it is growing as AI workloads expand globally. So how much water does AI use? Let’s discuss.

1. Why AI Systems Use Water

AI models run on powerful servers housed in data centres. These machines generate enormous amounts of heat when they operate, especially during model training and high-intensity inference (the act of generating responses).

To prevent overheating and hardware failure, data centres use cooling systems, many of which rely on water:

- Evaporative cooling systems circulate water to absorb and remove heat, and much of that water evaporates during the process.

- Water used in power generation also counts toward the AI water footprint. Electricity for data centres often comes from thermal power plants, which need water to produce steam and to cool it.

These two components, direct onsite cooling and indirect water tied to energy generation, together form the real water footprint of AI systems.
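The way the two components add up can be sketched in a few lines of Python. This is a minimal illustration, not a measurement method: the function name and both water-intensity values (litres per kWh) are hypothetical placeholders, chosen only to show how the direct and indirect parts combine.

```python
def water_footprint_litres(energy_kwh, direct_l_per_kwh, indirect_l_per_kwh):
    """Return (direct, indirect, total) water use in litres.

    direct_l_per_kwh: onsite cooling water per kWh (hypothetical value).
    indirect_l_per_kwh: water used in power generation per kWh (hypothetical value).
    """
    direct = energy_kwh * direct_l_per_kwh
    indirect = energy_kwh * indirect_l_per_kwh
    return direct, indirect, direct + indirect


# Illustrative inputs only; real intensities vary by facility and by grid.
direct, indirect, total = water_footprint_litres(
    energy_kwh=1_000, direct_l_per_kwh=1.5, indirect_l_per_kwh=3.0
)
print(f"direct={direct:.0f} L, indirect={indirect:.0f} L, total={total:.0f} L")
```

With these placeholder inputs the indirect share is twice the direct share. That is a property of the chosen numbers, not a finding about any real facility.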

2. Water Use at the Data Centre Level

Daily and Facility-Scale Use

According to environmental research and industry reports:

- A typical data centre can use around 300,000 gallons (1.1 million litres) of water per day for cooling, roughly the daily consumption of 1,000 households.

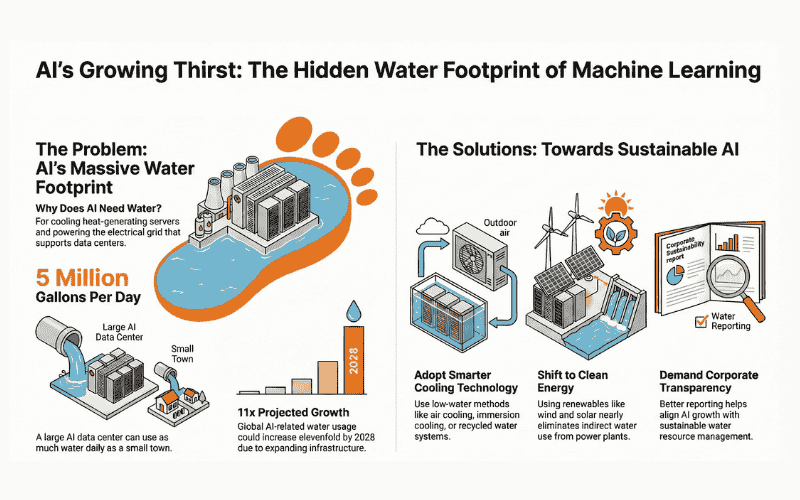

- Larger data centres dedicated to AI workloads can use up to 5 million gallons (19 million litres) per day, comparable to the consumption of a small town of tens of thousands of residents.

These figures include direct water use for cooling hardware and exclude indirect use from electricity generation.
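The unit conversions and the household comparison above can be checked with a short Python sketch. It assumes US liquid gallons (3.785411784 litres each). The per-household figure is simply the article's 300,000 gallons divided by its 1,000 households, not an independent statistic.

```python
LITRES_PER_US_GALLON = 3.785411784  # exact definition of the US liquid gallon


def gallons_to_litres(gallons):
    """Convert US liquid gallons to litres."""
    return gallons * LITRES_PER_US_GALLON


typical_litres = gallons_to_litres(300_000)
large_litres = gallons_to_litres(5_000_000)

# Daily use per household implied by the article's comparison.
implied_gallons_per_household = 300_000 / 1_000

print(f"typical: {typical_litres / 1e6:.2f} million litres/day")   # 1.14
print(f"large:   {large_litres / 1e6:.2f} million litres/day")     # 18.93
print(f"implied household use: {implied_gallons_per_household:.0f} gallons/day")  # 300
```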

National and Global Figures

- In the United States, data centres consumed roughly 17 billion gallons (64 billion litres) of water in 2023, primarily for cooling infrastructure.

- Projections suggest this could increase dramatically by 2028, potentially reaching 34-68 billion gallons annually in the U.S. alone as AI workloads expand.

On a global scale, some analysts estimate that data centres worldwide currently use around 560 billion litres of water per year, with projected growth to approximately 1,200 billion litres by 2030 as AI infrastructure grows.
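To put these projections on a common footing, the implied compound annual growth rate can be worked out as below. This is a rough sketch: the function name is ours, and because the global figure is only described as current, the 2025 base year used for it is an assumption, so that result is indicative at best.

```python
def implied_annual_growth(start, end, years):
    """Compound annual growth rate that takes `start` to `end` in `years` years."""
    return (end / start) ** (1 / years) - 1


# US: 17 billion gallons in 2023 to 34-68 billion gallons in 2028 (5 years).
us_low = implied_annual_growth(17, 34, 5)
us_high = implied_annual_growth(17, 68, 5)

# Global: 560 to 1,200 billion litres by 2030.
# ASSUMPTION: base year 2025, since the source only says "currently".
global_rate = implied_annual_growth(560, 1_200, 2030 - 2025)

print(f"US:     {us_low:.1%} to {us_high:.1%} per year")  # roughly 15% to 32%
print(f"Global: {global_rate:.1%} per year (assumed 2025 base)")
```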

3. Per-Interaction Water Use

Estimating water use per AI query can help illustrate scale, but these figures vary widely based on model size, data centre efficiency, and regional energy mix:

- One recent estimate puts a ChatGPT-type query at roughly 0.32 millilitres of water, most of it consumed indirectly through energy use rather than direct cooling.

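- Scaled across billions of daily interactions, even small per-query figures add up: at 0.32 millilitres per query, one billion queries comes to about 320,000 litres per day, and several billion pushes the total into the millions.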
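The scaling arithmetic is simple enough to sketch in Python. The 0.32 millilitre figure comes from the estimate quoted above. The daily query counts are hypothetical inputs, not reported traffic numbers.

```python
ML_PER_QUERY = 0.32  # per-query estimate quoted in the article


def litres_per_day(queries_per_day, ml_per_query=ML_PER_QUERY):
    """Total daily water use in litres for a given query volume."""
    return queries_per_day * ml_per_query / 1000


def queries_for_litres(target_litres, ml_per_query=ML_PER_QUERY):
    """Number of queries needed to reach a given water total in litres."""
    return target_litres * 1000 / ml_per_query


# Hypothetical daily volumes, for illustration only.
for queries in (1_000_000_000, 5_000_000_000):
    print(f"{queries:,} queries/day -> {litres_per_day(queries):,.0f} litres/day")

print(f"1 million litres/day needs {queries_for_litres(1_000_000):,.0f} queries/day")
```

Under this per-query estimate, it takes a little over three billion queries a day to reach one million litres.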

Academic research also highlights that training a large model like GPT-3 can require millions of litres of water when accounting for both direct cooling losses and energy-related water use during a training run.

4. What Drives Variability in Water Consumption

Water use varies significantly based on several factors:

Design and Cooling Technology

- Traditional wet cooling systems consume more water than closed-loop or air-based cooling systems.

Location and Climate

- Data centres in hotter, arid regions typically use more water than those in cooler or wetter climates.

Energy Source

- If electricity comes from fossil fuel power plants, indirect water use (for cooling steam cycles) can dominate the footprint. Renewable sources such as wind and solar have negligible operational water needs.

5. Future Trends and Concerns

Rapid Growth in Water Demand

As AI adoption rises, so will water demand:

- Analysts at major financial institutions project that global AI-related water usage could grow 11x by 2028, driven by expanded data centre capacity and high-intensity AI workloads.

Local Water Stress

In water-scarce regions, new AI facilities can compete with residential and agricultural demands, raising community concerns and prompting calls for stricter resource planning.

6. What Can Be Done to Reduce Water Use

Technology and Infrastructure Solutions

- Adoption of low-water cooling technologies such as air cooling, immersion cooling, and recycled water systems can significantly lower consumption.

- Siting data centres in water-secure, moderate climates reduces stress on local supplies.

Energy Decarbonization

- Shifting to renewable energy reduces indirect water use tied to thermal power plant cooling.

Corporate Transparency

- Better reporting of water footprint metrics by tech companies will help planners align AI growth with sustainable resource use.

7. Putting It in Perspective

While AI’s water use is substantial compared with that of other industries, it remains a fraction of total global freshwater consumption.

However, because the industry is rapidly expanding and interacts with other resource constraints (like electricity demand and climate impacts), its water footprint is a critical part of future planning for sustainable technology ecosystems.